In the age of ransomware attacks, having a current backup is paramount. But can you have an easy backup procedure and still be sure that your data is not lost even if the backup server is fully compromised? While there are many backup tools and vendors out there that promise this (e.g. velero/restic/duplicity, see the reference section below), they are complicated and/or proprietary, requiring you to trust whoever is providing them.

I prefer simple, time-tested backup solutions. Here is a fully end-to-end encrypted backup setup that uses nothing but bash, rsync and ssh. It supports:

- untrusted remote storage

- multiple clients backing up to one server

- backup partitions that grow and shrink as needed

- fully auditable storage

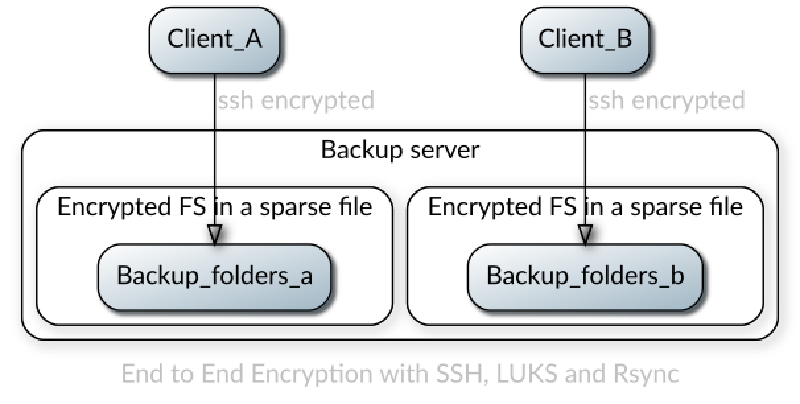

The way it works is like this:

- The remote server has a plain partition that stores one or more sparse files. Each file contains a LUKS-encrypted partition that holds the backups

- Before each backup the client mounts the plain partition over SSH, then opens the encrypted file and mounts the partition from inside of it

- It backs up the data and performs unmounting in the reverse order

- The remote server never sees the unencrypted data since SSH is providing encryption in transit and LUKS is providing encryption at rest.
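The sequence above can be sketched as a single bash function. This is a minimal sketch under stated assumptions, not the SARDELKA implementation: the paths, key file and mapper name are taken from the examples in this post, and the `run` helper, the `backup_cycle` function and the `DRY_RUN` variable are hypothetical additions that print each command instead of executing it.

```shell
#!/usr/bin/env bash
# Sketch of one backup cycle: mount in order, unmount in reverse order.
# All paths and names below are assumptions based on the examples in this post.
REMOTE="remotebackupserver:/path/to/backup/"
HOST_MNT="/mnt/backup-host"
LUKS_FILE="$HOST_MNT/backups-system-a.luks"
KEY_FILE="/root/luks/backupstore.key"
MAPPER="backup-partition"
BACKUP_MNT="/mnt/remote-backup"

# Hypothetical helper: with DRY_RUN=1 it only prints the command.
run() {
  if [ "${DRY_RUN:-0}" = "1" ]; then
    printf '%s\n' "$*"
  else
    "$@"
  fi
}

# Usage: backup_cycle <backup command and its arguments>
backup_cycle() {
  local rc=1
  run sudo sshfs -o allow_other "$REMOTE" "$HOST_MNT" || return 1
  if run sudo cryptsetup luksOpen "$LUKS_FILE" --key-file "$KEY_FILE" "$MAPPER"; then
    if run sudo mount "/dev/mapper/$MAPPER" "$BACKUP_MNT"; then
      run "$@"; rc=$?               # the backup command itself, e.g. rsync
      run sudo umount "$BACKUP_MNT"
    fi
    run sudo cryptsetup luksClose "$MAPPER"
  fi
  run sudo fusermount -u "$HOST_MNT"
  return "$rc"
}
```

Running `DRY_RUN=1 backup_cycle sudo rsync -a /home/ /mnt/remote-backup/home/` prints the commands in the order they would run, which is a cheap way to check the sequence before pointing it at real data. Each layer is only torn down if it was set up, so a failed luksOpen still unmounts SSHFS.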

Implementing E2EE backups

There are three steps in this process:

- Create an SSH connection to the server

- Create an encrypted file for the backups on the server

- Mount the encrypted file over SSH into the local file system

If you’d like to see it fully implemented and automated, check my SARDELKA project.

- Create SSH keys on the local system:

ssh-keygen -t ed25519 -f ~/.ssh/backupuser_ed25519

- Create a backup user on the remote system:

sudo useradd -m -s /bin/bash backupuser

sudo mkdir ~backupuser/.ssh/

sudo touch ~backupuser/.ssh/authorized_keys

sudo chown -R backupuser:backupuser ~backupuser/.ssh/

sudo chmod 700 ~backupuser/.ssh/

sudo chmod 600 ~backupuser/.ssh/authorized_keys

Copy the contents of ~/.ssh/backupuser_ed25519.pub from the local system into ~backupuser/.ssh/authorized_keys on the remote system.

Test that you can log in as backupuser on the remote system. To make this easier in the future, add the following to your ~/.ssh/config:

Host remotebackupserver

HostName backupserver

User backupuser

PubkeyAuthentication yes

IdentityFile ~/.ssh/backupuser_ed25519
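Since the backup will eventually run unattended, it helps to confirm that the login works without any prompt. The following is a minimal sketch: `check_backup_login` is a hypothetical helper, and `remotebackupserver` is the Host alias from the config entry above. `BatchMode=yes` and `ConnectTimeout` are standard ssh options.

```shell
#!/usr/bin/env bash
# Hypothetical helper: succeeds only if key-based login works without any prompt.
check_backup_login() {
  local host="${1:-remotebackupserver}"
  # BatchMode=yes makes ssh fail instead of asking for a password or passphrase.
  if ssh -o BatchMode=yes -o ConnectTimeout=10 "$host" true; then
    echo "OK: key-based login to $host works"
  else
    echo "FAIL: cannot log in to $host non-interactively" >&2
    return 1
  fi
}
```

Call it as `check_backup_login` before the first backup. If the key is protected by a passphrase and no agent is running, this check fails, which is the same thing that would happen in a cron job.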

- Configure FUSE to allow root to operate on user mounts. This is needed because cryptsetup has to run as root to create /dev/mapper devices. To do this, uncomment user_allow_other in /etc/fuse.conf

- Mount the remote file system over SSHFS

sudo sshfs -o allow_other remotebackupserver:/path/to/backup/ /mnt/backup-host

- Create a sparse file that will hold your backups, specifying the maximum size you want it to grow to (this can be increased later).

truncate -s 5T /mnt/backup-host/backups-system-a.luks

- Set up LUKS encryption inside this file with a new key file

sudo mkdir -p /root/luks

sudo dd if=/dev/random of=/root/luks/backupstore.key bs=4096 count=1

sudo chmod 600 /root/luks/backupstore.key

sudo cryptsetup luksFormat /mnt/backup-host/backups-system-a.luks --key-file /root/luks/backupstore.key

- Create a normal filesystem inside the encrypted file. Initialize the whole filesystem right away to avoid performance issues later caused by lazy initialization.

sudo cryptsetup luksOpen /mnt/backup-host/backups-system-a.luks --key-file /root/luks/backupstore.key backup-partition

sudo mkfs.ext4 -m0 -E lazy_itable_init=0,lazy_journal_init=0 /dev/mapper/backup-partition

sudo mount /dev/mapper/backup-partition /mnt/remote-backup
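The key-file step above creates the file first and tightens its permissions afterwards. A possible variation, shown here as a minimal sketch, sets a restrictive umask so the key never exists with wider permissions and refuses to overwrite an existing key. `make_keyfile` is a hypothetical helper, and it reads from /dev/urandom instead of /dev/random, which is a substitution made for this example.

```shell
#!/usr/bin/env bash
# Hypothetical helper: create a 4096-byte random key file that is private from the start.
make_keyfile() {
  local path="$1"
  # Refuse to overwrite an existing key: losing it means losing access to the backups.
  if [ -e "$path" ]; then
    echo "refusing to overwrite $path" >&2
    return 1
  fi
  (
    umask 077                          # new files get mode 600, new directories 700
    mkdir -p "$(dirname "$path")" &&
      head -c 4096 /dev/urandom > "$path"
  )
}
```

The subshell keeps the umask change from leaking into the calling shell. Run it as root with the path you pass to `--key-file`, and keep a copy of the key somewhere other than the machine being backed up.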

Backup

Now you can start backing up your system to /mnt/remote-backup as if it were a local, trusted file system. For example, to run something like a synthetic full backup:

sudo rsync -vaH --numeric-ids --delete --delete-excluded --stats --log-file system.log --log-file-format="%i %10l %n %L" --progress --prune-empty-dirs --link-dest=/mnt/remote-backup/home --exclude-from system.exclude /home/ /mnt/remote-backup/home.<date>
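With `--link-dest`, rsync hard-links unchanged files against an earlier copy, so each dated directory looks like a full backup while only changed files take up new space. The sketch below assumes dated directories named `home.YYYY-MM-DD` and links against the most recent one, which is a variation on the fixed `--link-dest` path in the command above. `latest_snapshot` and `snapshot_cmd` are hypothetical helpers that only print a shortened rsync command rather than run it.

```shell
#!/usr/bin/env bash
# Hypothetical helpers; the home.YYYY-MM-DD naming scheme is an assumption.

# Print the newest existing snapshot directory under $1, or nothing if there is none.
latest_snapshot() {
  local root="$1" dir newest=""
  for dir in "$root"/home.????-??-??; do
    [ -d "$dir" ] || continue        # skip the literal pattern when nothing matches
    newest="$dir"                    # globs sort lexically, so ISO dates sort by time
  done
  if [ -n "$newest" ]; then
    printf '%s\n' "$newest"
  fi
}

# Print the rsync command for a snapshot dated $2 (default: today).
snapshot_cmd() {
  local root="$1" today="${2:-$(date +%F)}" prev
  prev="$(latest_snapshot "$root")"
  printf 'rsync -aH --numeric-ids --delete'
  # Link against the previous snapshot unless it is the one being written.
  if [ -n "$prev" ] && [ "$prev" != "$root/home.$today" ]; then
    printf ' --link-dest=%s' "$prev"
  fi
  printf ' /home/ %s/home.%s\n' "$root" "$today"
}
```

`snapshot_cmd /mnt/remote-backup` prints the command for today's snapshot. On the very first run there is no previous snapshot, so no `--link-dest` is emitted and rsync makes a plain full copy.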

Teardown

After the backup is done, unmount everything in reverse order:

sudo umount /mnt/remote-backup

sudo cryptsetup luksClose /dev/mapper/backup-partition

sudo fusermount -u /mnt/backup-host

Maintenance

Moving backups

If you ever need to move your backup sparse file, use cp --sparse=always or sudo dd if=backups-system-a.luks of=otherfolder/backups-system-a.luks bs=64K conv=sparse. The 64K block size is chosen to limit ‘bloat’ during dd copying (growth of the copy relative to the original).
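To see what "sparse" means in practice, the sketch below creates a small sparse file in a temporary directory, copies it with `cp --sparse=always`, and prints the apparent size next to the space actually allocated for both. It assumes GNU coreutils (`truncate`, `stat -c`, `cp --sparse`); `apparent_bytes` and `allocated_bytes` are hypothetical helper names, and the file names are only for illustration.

```shell
#!/usr/bin/env bash
# Apparent size is what `ls -l` reports; allocated size is what the blocks on disk add up to.
apparent_bytes() { stat -c %s "$1"; }
allocated_bytes() {
  # %b is the number of allocated blocks, %B the size in bytes of each such block.
  set -- $(stat -c '%b %B' "$1")
  echo $(( $1 * $2 ))
}

workdir="$(mktemp -d)"
truncate -s 100M "$workdir/demo.luks"      # sparse: the size is set, no data is written
# Write a few real bytes near the start so the file is not entirely empty.
printf 'payload' | dd of="$workdir/demo.luks" bs=1 seek=4096 conv=notrunc status=none
cp --sparse=always "$workdir/demo.luks" "$workdir/copy.luks"

for f in demo.luks copy.luks; do
  echo "$f apparent=$(apparent_bytes "$workdir/$f") allocated=$(allocated_bytes "$workdir/$f")"
done
rm -rf "$workdir"
```

If the allocated figure of a copy comes out close to its apparent size, the copy was made without sparse handling and the file has been inflated to its full size.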

Increasing backup file size

Use truncate and resize2fs

sudo sshfs -o allow_other remotebackupserver:/path/to/backup/ /mnt/backup-host

truncate -s 8T /mnt/backup-host/backup-file.luks

sudo cryptsetup luksOpen /mnt/backup-host/backup-file.luks --key-file /root/luks/backupstore.key backup-partition

sudo resize2fs /dev/mapper/backup-partition
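Note that `truncate -s` sets an absolute size, so a typo that gives a value smaller than the current one would cut off the end of the encrypted container. A minimal guard, assuming GNU `stat` and `truncate`, could look like this; `grow_file` is a hypothetical helper that takes the new size in bytes.

```shell
#!/usr/bin/env bash
# Hypothetical helper: grow a sparse file to a new size in bytes, never shrink it.
grow_file() {
  local file="$1" new_size="$2" current
  current="$(stat -c %s "$file")" || return 1
  if [ "$new_size" -le "$current" ]; then
    echo "refusing: $new_size is not larger than current size $current" >&2
    return 1
  fi
  truncate -s "$new_size" "$file"
}
```

For example, `grow_file /mnt/backup-host/backup-file.luks "$(numfmt --from=iec 8T)"` would replace the plain truncate call above, with `numfmt` from GNU coreutils converting the suffix to bytes.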

Decreasing backup file size

The size of the sparse file does not automatically decrease when you delete files from the backups. To shrink it, you need a way to run the filesystem TRIM/discard commands. However, SSHFS/SFTP does not support TRIM, so you’ll need to temporarily mount the file over NBD. Note that although NBD supports TLS, setting it up is a chore for this purpose, so here is a plain-text example that is not encrypted in transit. Make sure to use it ONLY for the trim command and nothing else.

- Get the NBD server and client

apt-get install nbd-server nbd-client

- Enable trim in the config file:

[default]

exportname = /mnt/backup-host/backup-file.luks

trim = true

- Start the NBD server

sudo nbd-server -d -C /path/to/config

- Connect to it from the client

nbd-client -N default -n backup-server 10809 /dev/nbd0

- Open the LUKS file with the --allow-discards option:

sudo cryptsetup luksOpen --allow-discards /dev/nbd0 backup-mapper

- Mount it

sudo mount /dev/mapper/backup-mapper /mnt/remote-backup

- Trim the deleted space:

fstrim -v /mnt/remote-backup

- Tear everything down
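The teardown step is not spelled out above. One plausible sequence, assuming the device, mapper and mount point names from the previous steps, undoes them in reverse order on the client. `nbd_teardown` and `DRY_RUN` are hypothetical; with `DRY_RUN=1` the commands are only printed.

```shell
#!/usr/bin/env bash
# Hypothetical sketch: undo the NBD trim setup in reverse order (run on the client).
nbd_teardown() {
  local cmd
  for cmd in \
    "umount /mnt/remote-backup" \
    "cryptsetup luksClose backup-mapper" \
    "nbd-client -d /dev/nbd0"
  do
    if [ "${DRY_RUN:-0}" = "1" ]; then
      echo "sudo $cmd"
    else
      # Word splitting of $cmd is intentional: each entry is a simple command line.
      sudo $cmd || return 1
    fi
  done
}
```

After the client has disconnected, stop the nbd-server process on the server as well, so the plain-text export does not stay reachable longer than the trim requires.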

Notes

There is still a chance for the local computer to corrupt or delete the remote backups if the local computer is compromised. Here are some ideas on how to mitigate that:

- Use an encrypted rsync server/client connection with full synthetic backups (see SARDELKA), as you will not be able to delete or corrupt already existing backups that way.

- Use append-only/write-only backups with restic and rclone.

- Use an append-only FS in the backup file